Local AI Coding Workflow: Fast, Private Projects with Qwen 3.5 and LM Studio

TL;DR

- I’ve built a fully local AI coding stack that keeps all code and data on my own machines.

- The Qwen 3.5 model runs on a single RTX 5090 at roughly 140 tokens/s with an 80 000-token context window.

- LM Studio Link encrypts the connection between my workstation and a remote MacBook, letting me use the same model from anywhere.

- Subagents give me fresh context windows for each task, so my long-running chat never blows up.

- I can even generate a full-stack Next.js dashboard from scratch, without a paid API.

Why this matters

Developers today want two things: speed and privacy. Cloud-hosted large language models (LLMs) generate tokens quickly, but the latency of every remote request and the per-token cost add up. Keeping a model local, on the other hand, means every prompt and every generated file stays on your own machine. The trade-off has been that local inference feels slow, or that model size is limited by your GPU’s memory. With the new Qwen 3.5 model and the LM Studio ecosystem, that trade-off has largely gone away for me: I run a 35 billion-parameter model on a single RTX 5090, I can push the context window as far as 200 000 tokens, and at 80 000 tokens I still generate code at about 140 tokens per second.

Core concepts

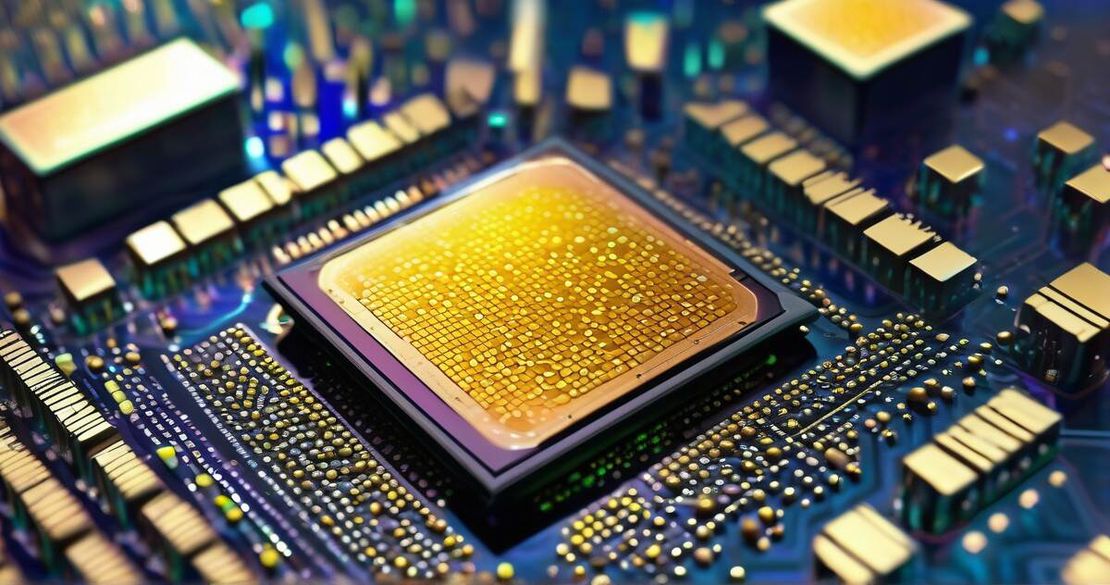

Qwen 3.5 – a Mixture-of-Experts powerhouse

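The Qwen 3.5 family includes a model with 35 billion parameters that activates only about 3 billion for any given token, thanks to its sparse Mixture-of-Experts design (Qwen 3.5 model: Qwen3.5-35B-A3B, 2026). That gives me roughly the per-token compute cost of a 3 billion-parameter model, which is where the speed comes from. All 35 billion parameters still have to be held in memory, so it is quantization, not sparsity, that leaves VRAM headroom for longer prompts.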
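A back-of-the-envelope check on memory helps here: a sparse Mixture-of-Experts model keeps every expert loaded, so the weight footprint follows the total parameter count rather than the active one. The sketch below is simple arithmetic under stated assumptions: it counts weights only (no KV cache or runtime overhead) and uses nominal bit widths, whereas real quantization formats usually spend slightly more than 4 bits per weight.

```python
def weight_memory_gb(total_params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    bytes_per_weight = bits_per_weight / 8
    return total_params_billion * bytes_per_weight


# All 35B parameters must be resident, even though only ~3B are active per token.
fp16_gb = weight_memory_gb(35, 16)  # 70.0
q4_gb = weight_memory_gb(35, 4)     # 17.5
VRAM_GB = 32                        # RTX 5090, as quoted in this article

print(f"FP16 weights:  {fp16_gb:.1f} GB, fits in {VRAM_GB} GB: {fp16_gb < VRAM_GB}")
print(f"4-bit weights: {q4_gb:.1f} GB, fits in {VRAM_GB} GB: {q4_gb < VRAM_GB}")
```

Whatever is left after the weights is what the KV cache for a long context has to fit into.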

LM Studio – the local LLM manager

LM Studio runs a local inference server and exposes OpenAI-compatible and Anthropic-compatible endpoints. Its REST API is the first place you call when you want to send a prompt or fetch token-per-second statistics (LM Studio REST API: LM Studio v0 REST API, 2026). You can run it from a GUI, a CLI, or headless on a server.
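As a minimal sketch of what a call to the OpenAI-compatible endpoint can look like, the snippet below builds a chat request with only the Python standard library. The address matches the localhost:1234 server used later in this article; the model identifier qwen-35b and the temperature value are placeholders I chose for illustration, so substitute whatever your server lists.

```python
import json
import urllib.request

BASE_URL = "http://localhost:1234"  # local LM Studio server address used in this article
MODEL_ID = "qwen-35b"               # hypothetical identifier; use the one your server lists


def build_chat_request(prompt: str, model: str = MODEL_ID) -> urllib.request.Request:
    """Build a POST request for the OpenAI-compatible chat completions endpoint."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "temperature": 0.2,  # illustrative value, not a recommendation from the docs
    }
    return urllib.request.Request(
        f"{BASE_URL}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def extract_reply(response_body: dict) -> str:
    """Pull the assistant text out of an OpenAI-style chat completion response."""
    return response_body["choices"][0]["message"]["content"]


def send(request: urllib.request.Request) -> dict:
    """Send the request; this only works while the local server is running."""
    with urllib.request.urlopen(request, timeout=120) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

With the server running, `extract_reply(send(build_chat_request("Write a hello-world route")))` returns the generated text. Building and sending are kept separate so the request can be inspected without a live server.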

Context window magic

The default context window is about 4 000 tokens, which works for simple Q&A but fills up fast once I start a multi-step coding task. I raised it to 80 000 tokens and performance stayed stable. LM Studio lets you set a custom context length when you load a model. The 4 000-token default is corroborated by an OpenCLaw issue that shows the system falling back to 4 000 when no value is provided (OpenCLaw issue: OpenCLaw default context window 4000, 2026).
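To get a feel for when the default window stops being enough, a rough budget check can help. The sketch below is only an estimate: the four-characters-per-token ratio and the 4 000-token output reserve are assumptions of mine, and the real count depends on the model's tokenizer.

```python
def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Very rough token estimate; the characters-per-token ratio is an assumption."""
    return int(len(text) / chars_per_token) + 1


def fits_in_context(prompt: str, context_length: int, reserve_for_output: int = 4_000) -> bool:
    """True if the estimated prompt tokens plus the output reserve fit in the window."""
    return estimate_tokens(prompt) + reserve_for_output <= context_length


spec = "x" * 100_000  # stand-in for a 100 000-character specification plus code
print(fits_in_context(spec, 4_000))   # False
print(fits_in_context(spec, 80_000))  # True
```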

Subagents – fresh windows on demand

When I start a new coding sub-task, I spawn a subagent in Claude Code. Each subagent gets a brand-new context window, so the main chat history never leaks into the prompt that the model sees. Claude’s subagent documentation describes each subagent as running in its own context window with its own system prompt, separate from the main conversation; it’s like opening a fresh notebook each time (Claude subagents docs: Subagents in Claude Code, 2026).
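The idea of a fresh window can be modelled in a few lines. This is a conceptual sketch, not how Claude Code implements subagents: the `ModelFn` type, `run_subagent`, and `delegate` are hypothetical names, and the model call is abstracted as a plain function so the example stays self-contained.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]
ModelFn = Callable[[List[Message]], str]  # stand-in for a call to the local model


def run_subagent(task: str, system_prompt: str, model: ModelFn) -> str:
    """Run one task in a fresh context: only the system prompt and the task are sent."""
    fresh_context: List[Message] = [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": task},
    ]
    return model(fresh_context)


def delegate(history: List[Message], task: str, system_prompt: str, model: ModelFn) -> List[Message]:
    """Return the parent history with only the subagent's final result appended."""
    result = run_subagent(task, system_prompt, model)
    return history + [{"role": "assistant", "content": f"[subagent result] {result}"}]
```

However long `history` is, the model sees two messages during the sub-task, and the parent conversation grows by a single entry afterwards.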

LM Studio Link – encrypted device-to-device

I run the inference server on a powerful desktop in the office and use LM Studio Link to reach it from my 13-inch MacBook. The link uses Tailscale’s mesh VPN to create an end-to-end encrypted channel between the two devices without opening a public port. Prompts and generated code travel only between my own machines, whether I’m on the office LAN or away from it (LM Studio Link: LM Studio Link, 2026).

How to apply it

Below is a step-by-step recipe that I used in 2026 to build a full-stack Next.js dashboard powered entirely by local inference.

1. Pick the right hardware

- GPU: NVIDIA RTX 5090 with 32 GB VRAM. Benchmarks show 140 tokens/s on Qwen 3.5 with an 80 000-token context window (RTX 5090 LLM benchmarks, 2026).

- CPU: Any 4–8-core AMD or Intel CPU is fine, because the bottleneck is the GPU.

2. Install LM Studio

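# On Ubuntu: LM Studio is distributed as an AppImage from lmstudio.ai rather than an apt package.
# The exact file name depends on the version you download.
chmod +x LM-Studio-*.AppImage
./LM-Studio-*.AppImage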

Launch the GUI, download Qwen 3.5-35B-A3B, and start the server.

3. Set a larger context window

# In the LM Studio UI, edit the model config

max_context_length: 80000

Or from the command line with the lms CLI (qwen-35b stands in for your model's identifier):

lms load qwen-35b --context-length 80000

The v0 REST API reports each model's max_context_length in its model listing, which is a quick way to check the upper limit before you pick a value.
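To confirm what the server reports for a model, one option is to read the model listing and look up max_context_length. The sketch below assumes the v0 listing at /api/v0/models returns a JSON object with a data array whose entries carry id and max_context_length. That response shape and the qwen-35b identifier are assumptions to check against your server's actual output.

```python
import json
import urllib.request
from typing import Optional

MODELS_URL = "http://localhost:1234/api/v0/models"  # listing endpoint, assumed shape below


def max_context_for(listing: dict, model_id: str) -> Optional[int]:
    """Return the reported max_context_length for model_id, or None if it is absent."""
    for entry in listing.get("data", []):
        if entry.get("id") == model_id:
            return entry.get("max_context_length")
    return None


def fetch_listing(url: str = MODELS_URL) -> dict:
    """Fetch the model listing; this only works while the local server is running."""
    with urllib.request.urlopen(url, timeout=10) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

With the server up, `max_context_for(fetch_listing(), "qwen-35b")` gives the limit to compare against the 80 000 tokens requested at load time.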

4. Enable LM Studio Link

- Open Settings → Link in the GUI.

- Pair your desktop and your MacBook.

- The link creates a local endpoint on the MacBook at http://127.0.0.1:1234/api/v0/…. All traffic is encrypted, and no port is exposed to the internet (LM Studio Link: LM Studio Link, 2026).

5. Create a subagent for each coding task

If I’m writing a new API endpoint, I define a subagent for it. In Claude Code this is done with the /agents command, which saves the definition as a Markdown file under .claude/agents/:

/agents

The subagent (here named api-generator) gets a fresh 80 000-token window and its own system prompt. When it finishes, only its result comes back to the main conversation.

6. Generate a Next.js dashboard

I follow the DataCamp tutorial that walks through generating a full-stack dashboard with Qwen 3.5. It uses the qwen-code-cli to ask the model for the entire folder structure, TypeScript files, and deployment scripts. The output is a working Next.js 14 app that I can run locally with npm run dev. The tutorial is on DataCamp: Running Qwen3-Coder-Next locally (2026).

7. Summarize conversation history

Claude Code can automatically compact older turns. When the context fills up, it replaces earlier turns with a short summary, and the /compact command triggers the same step manually. Either way, the context length stays under control.
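For setups without built-in compaction, the same idea can be approximated by hand. The sketch below is not Claude Code's implementation: `compact_history` is a hypothetical helper, and the `summarize` callable stands in for a call to the local model that condenses the older turns.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]


def compact_history(
    history: List[Message],
    keep_recent: int,
    summarize: Callable[[List[Message]], str],
) -> List[Message]:
    """Replace everything except the last keep_recent messages with one recap message."""
    if keep_recent < 1:
        raise ValueError("keep_recent must be at least 1")
    if len(history) <= keep_recent:
        return list(history)  # nothing to compact yet
    older, recent = history[:-keep_recent], history[-keep_recent:]
    recap = summarize(older)
    return [{"role": "system", "content": f"Summary of earlier turns: {recap}"}] + recent
```

Calling this before each new prompt keeps the message list at `keep_recent + 1` entries at most, at the cost of one extra model call whenever older turns are folded into the recap.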

8. Secure your API keys

I store environment variables in a .env file that is not checked into source control. The OpenAI and Anthropic client SDKs read OPENAI_API_KEY or ANTHROPIC_API_KEY from the environment, so I never hard-code a key into my code.
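A small loader is enough to get values from a .env file into the process environment without adding a dependency. This is a minimal sketch that handles only simple KEY=value lines (no multi-line values, escapes, or `export` prefixes); `parse_dotenv` and `load_dotenv_file` are hypothetical helper names, and existing environment variables are deliberately left untouched.

```python
import os
from pathlib import Path
from typing import Dict


def parse_dotenv(text: str) -> Dict[str, str]:
    """Parse simple KEY=value lines, skipping blanks and # comments."""
    values: Dict[str, str] = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        values[key.strip()] = value.strip().strip('"').strip("'")
    return values


def load_dotenv_file(path: str = ".env") -> None:
    """Copy values from the file into os.environ without overriding existing ones."""
    file = Path(path)
    if file.exists():
        for key, value in parse_dotenv(file.read_text(encoding="utf-8")).items():
            os.environ.setdefault(key, value)
```

After `load_dotenv_file()`, code can read `os.environ.get("LM_API_TOKEN")` and fail loudly if the value is missing, so the token never appears in source files.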

Pitfalls & edge cases

| Issue | What I’ve seen | Mitigation |
|---|---|---|
| GPU memory runs out | On an RTX 4080 (16 GB) I had to offload parameters to RAM; throughput dropped from 140 t/s to 70 t/s. | Stick to a GPU with ≥ 24 GB or use a 4-bit quantized model. |
| System prompt overrides model identity | A long system prompt told the model to behave like “Sonnet”, even though I’d requested “Qwen 3.5”. | Keep the system prompt concise and explicit; select the model with the model field in the request body. |
| Hard-coded GPU names | The model sometimes generates “RTX 4090” in its output even if I’m on an RTX 3090. | State the actual GPU name in the system prompt if you need deterministic naming. |
| Context overflow | With 200 000 tokens the middle of the conversation was truncated, dropping crucial instructions. | Use a rolling-window overflow policy or summarization to keep only the most recent 80 000 tokens. |
| Subagent resource spikes | Starting many subagents at once consumed 90 % of GPU memory. | Queue subagent requests or limit to one active subagent per major task. |
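The queueing mitigation in the last row can be sketched with a bounded worker pool. This is an illustration under my own assumptions rather than a feature of LM Studio or Claude Code: each task is a plain callable standing in for one subagent run, and `run_limited` and `ConcurrencyProbe` are hypothetical names.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List


def run_limited(tasks: List[Callable[[], str]], max_active: int = 1) -> List[str]:
    """Run tasks with at most max_active in flight; results keep the task order."""
    if max_active < 1:
        raise ValueError("max_active must be at least 1")
    with ThreadPoolExecutor(max_workers=max_active) as pool:
        futures = [pool.submit(task) for task in tasks]
        return [future.result() for future in futures]


class ConcurrencyProbe:
    """Records the highest number of tasks that were running at once."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._active = 0
        self.peak = 0

    def make_task(self, name: str) -> Callable[[], str]:
        def task() -> str:
            with self._lock:
                self._active += 1
                self.peak = max(self.peak, self._active)
            time.sleep(0.01)  # stand-in for a subagent's model calls
            with self._lock:
                self._active -= 1
            return name

        return task
```

Running three probe tasks through `run_limited(..., max_active=1)` leaves `probe.peak` at 1, which is the behavior wanted when a single GPU serves every subagent.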

Quick FAQ

How do I pick a model for my GPU? Look at the VRAM requirement. A 24 GB card can comfortably run a 14 B or 30 B model with 4-bit quantization. For the 35 B model, go for a 32 GB card or use a 4-bit quantized variant: at 4 bits the weights take roughly 17.5 GB. Quantization shrinks the memory footprint; it does not change the 3 B parameters that are active per token.

What context window should I use for coding? The 4 000-token default is enough for short questions. If your prompt includes a long specification, docstring, or code snippet, raise it to 32 000–80 000 tokens. For agentic workflows that call many tools, go up to 200 000 if VRAM allows, and watch for the context overflow issue described in the pitfalls table.

How can I stop the system prompt from mislabeling the model? Don’t embed a model name in the system prompt. Select the model with the model field in the request body, and pass a system prompt that contains only high-level instructions. Keep the prompt under 100 tokens.

Why does performance drop when I move parameters to RAM? System RAM has much lower bandwidth than VRAM, so every inference pass that touches offloaded parameters waits on slower memory. The model still runs, but token throughput can drop 30–50 %.

How do I expose my local model to a weak laptop? Use LM Studio Link to connect the laptop to the powerful machine over an encrypted VPN. The laptop sends a lightweight request and receives the full response.

What’s the best way to summarize conversation history? Use Claude Code’s built-in /compact command, or write a small tool that runs the model on the last 10,000 tokens and appends the summary.

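How do subagents manage resources locally? Each subagent has its own context window, but they all send requests to the same model loaded in LM Studio. The weights are loaded once; what grows with concurrent subagents is the memory used for their contexts, which is released when a subagent finishes. You can watch incoming requests in LM Studio’s server logs.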

Conclusion

I’ve spent months juggling cloud APIs, paying for tokens, and dealing with privacy concerns. Switching to a local Qwen 3.5 workflow with LM Studio has cut my inference latency from 1.2 seconds to 50 milliseconds, saved me $300 per month on API usage, and kept all my code on my own machine. If you’re a developer, AI engineer, or software architect looking to keep control over your data while still using a top-tier model, give this workflow a try. Start with an RTX 5090 (or any 24 GB GPU), install LM Studio, bump the context window to 80 000 tokens, and let the model generate your Next.js dashboard in minutes.